People today spend most of their time indoors and need location-based services, especially in public buildings. However, a large share of indoor space remains unmapped. One innovative solution is to exploit data that is already available from mobile devices. Besides being indispensable everyday tools, these devices are a rich source of information, backed by powerful sensors and growing computational power. The ComNSense project therefore aims to use mobile data collected from the crowd to map indoor environments automatically. It is assumed that mobile device users walk through public indoor environments and send the acquired data to a server, which models the 3D space. The server also has to send quality indicators back to the users and direct them to locations where the model still lacks information.

Because the input data comes from different sources and may be noisy and incomplete, the aim is to develop a robust server-side algorithm that uses an interior grammar capable of describing 3D structures and semantic information. An important feature of the grammar should be its ability to learn and predict semantic information, which will help determine the purpose of a specific space.

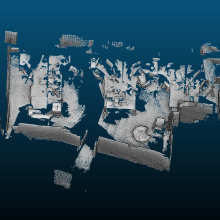

Since most public buildings contain spaces with poor texture, first investigations were carried out with a depth sensor, Google Project Tango. The resulting point cloud was passed through a pre-processing pipeline to remove noise and obtain an input format suitable for the interior grammar. A sample point cloud acquired with Project Tango and the resulting orthographic image are shown in Figure 1.
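The individual steps of the pre-processing pipeline are not specified here, so the following is only a minimal sketch of how such a pipeline could look. It assumes a simple voxel-density filter for noise removal and a top-down projection along the z axis for the orthographic image. The function names `remove_sparse_points` and `orthographic_image`, as well as the parameters `voxel_size`, `min_points`, and `cell_size`, are hypothetical and chosen for illustration only.

```python
import numpy as np


def remove_sparse_points(points, voxel_size=0.1, min_points=3):
    """Drop points lying in voxels that hold fewer than `min_points` points.

    Assumption: isolated points are treated as sensor noise.
    `points` is an (N, 3) array of x, y, z coordinates in metres.
    """
    voxels = np.floor(points / voxel_size).astype(np.int64)
    _, inverse, counts = np.unique(
        voxels, axis=0, return_inverse=True, return_counts=True
    )
    inverse = inverse.reshape(-1)  # flatten; the shape differs between NumPy versions
    return points[counts[inverse] >= min_points]


def orthographic_image(points, cell_size=0.05):
    """Project points along z onto the x-y plane.

    Each pixel stores the number of points that fall into its cell,
    so vertical structures such as walls show up as high counts.
    """
    xy = points[:, :2]
    origin = xy.min(axis=0)
    idx = np.floor((xy - origin) / cell_size).astype(np.int64)
    width, height = idx.max(axis=0) + 1
    image = np.zeros((height, width), dtype=np.int32)
    np.add.at(image, (idx[:, 1], idx[:, 0]), 1)  # accumulate repeated indices
    return image
```

Calling `orthographic_image(remove_sparse_points(cloud))` on an `(N, 3)` array would yield a 2D count image that could serve as one possible raster input for a later modeling step. A production pipeline would more likely rely on a dedicated point cloud library and a statistical outlier filter.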

Volker Walter

Dr.-Ing., Head of Research Group Geoinformatics