Konstantin Hoppe

Fusion of point clouds from Photogrammetry and LiDAR with point-wise quality values

Duration of the Thesis: 6 months

Completion: May 2019

Supervisors: Dr. Konrad Wenzel, M.A. Tobias Hauck (nFrames)

Examiner: Prof. Dr.-Ing. Norbert Haala

Introduction

The fusion of point clouds from photogrammetry and LiDAR using point-wise quality measures is an approach with the potential to produce very accurate results. Determining the quality of each point resolves problems that inevitably arise when data points from different sensors are fused. One example is the different sampling of LiDAR and multi-view stereo points with respect to detail preservation: because dense image matching (DIM) typically samples the surface more densely, several multi-view stereo points usually lie between two LiDAR points. To give the LiDAR points sufficient weight when they are fused with DIM points, the dense point cloud therefore has to be filtered first. The goal of this thesis is to review an existing geometrical quality measure for multi-view stereo and to define an improved one. This approach is meant to establish the precondition for an intelligent fusion of LiDAR and multi-view stereo point clouds.
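The weighting problem can be made concrete with inverse-variance weighting, a standard way of fusing measurements with known precisions (the thesis summary does not state that this exact scheme is used). The sketch below assumes one hypothetical LiDAR height with σ = 2 cm and ten hypothetical DIM heights of the same spot with σ = 4 cm each, and treats all errors as independent. Although each DIM point is less precise, together they carry most of the weight.

```python
import numpy as np


def inverse_variance_mean(values, sigmas):
    """Fuse measurements of one quantity with weights w_i = 1 / sigma_i**2.

    Assumes independent errors; returns the fused value, its standard
    deviation and the individual weights.
    """
    values = np.asarray(values, dtype=float)
    weights = 1.0 / np.asarray(sigmas, dtype=float) ** 2
    fused = np.sum(weights * values) / np.sum(weights)
    fused_sigma = 1.0 / np.sqrt(np.sum(weights))
    return fused, fused_sigma, weights


# Hypothetical heights (m) of one surface location.
lidar_z, lidar_sigma = 100.00, 0.02
dim_z, dim_sigma = 100.10, 0.04
values = [lidar_z] + [dim_z] * 10   # one LiDAR point, ten DIM points
sigmas = [lidar_sigma] + [dim_sigma] * 10

fused, fused_sigma, w = inverse_variance_mean(values, sigmas)
lidar_share = w[0] / w.sum()
print(f"fused z = {fused:.3f} m, LiDAR weight share = {lidar_share:.2f}")
```

Here the LiDAR point receives only 2/7 of the total weight, so the fused height is pulled toward the DIM value. Thinning the DIM points to a single point at this location would raise the LiDAR share to 2500 / (2500 + 625) = 0.8, which illustrates why filtering the dense cloud comes first.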

Methodology

The goal of this thesis was to introduce a local point-wise quality measure for multi-view stereo that determines the precision of each point. For this purpose, the depth random error σDepth, a geometrical precision measure presented in [Rothermel, 2012], was adopted as the local point-wise quality measure. Because it should be comparable to point-wise quality measures of other acquisition methods such as LiDAR, σDepth is defined in object space: an assumed random disparity error is projected onto the ray of the base image. σDepth thus depends on two factors: the base-to-height ratio, which determines the intersection angle of the stereo models, and the image scale, which determines the ground sampling distance (GSD).

To evaluate the behaviour of σDepth and verify its reliability, a precision measure σplaneFit for multi-view stereo point clouds was introduced that determines the precision on flat, horizontal surfaces. Since no ground truth data were available, this design exploits the knowledge that certain areas are flat: a plane is fitted to such an area and the standard deviation of the dense point cloud with respect to this plane is computed. This proved to be a reliable quality measure.

However, σDepth and σplaneFit were not directly comparable, because the precision of a point cloud depends not only on the geometrical configuration but also on texture quality. The thesis demonstrated this by evaluating identical textures under different illuminations, and therefore with different texture quality. Consequently, a texture quality measure t was developed. It is essentially a tuned high-pass image filter and allows the determination of a texture-dependent point-wise quality measure.
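How the two factors enter σDepth can be seen in the idealized normal case of stereo (parallel viewing directions, base B, flying height H, focal length c in pixels). There, projecting a disparity error σd into object space gives the textbook relation σZ = (H / B) · (H / c) · σd = (H / B) · GSD · σd. This is only a simplified sketch, not the general ray-based formulation of [Rothermel, 2012]; the disparity error of 0.5 px and the block parameters below are assumptions.

```python
def ground_sampling_distance(height_m, focal_px):
    """GSD in metres per pixel for a nadir image: height / focal length (pixels)."""
    return height_m / focal_px


def sigma_depth_normal_case(height_m, base_m, focal_px, sigma_disparity_px=0.5):
    """Depth random error for the normal case of stereo.

    sigma_Z = (H / B) * GSD * sigma_d
    H / B reflects the intersection geometry (base-to-height ratio),
    the GSD reflects the image scale. sigma_disparity_px is an assumed value.
    """
    gsd = ground_sampling_distance(height_m, focal_px)
    return (height_m / base_m) * gsd * sigma_disparity_px


# Hypothetical aerial block: 1000 m flying height, 10000 px focal length (GSD 0.1 m).
narrow = sigma_depth_normal_case(1000.0, 300.0, 10000.0)  # B/H = 0.3
wide = sigma_depth_normal_case(1000.0, 600.0, 10000.0)    # B/H = 0.6
print(f"sigma_Depth: B/H=0.3 -> {narrow:.3f} m, B/H=0.6 -> {wide:.3f} m")
```

Doubling the base (a larger intersection angle) halves σDepth, and so does halving the GSD at a fixed base-to-height ratio.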

Results

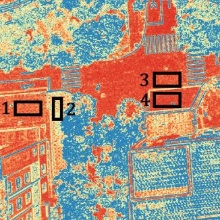

Evaluating σDepth with the two additionally defined measures σplaneFit and t led to several results. First, the expected behaviour of σDepth in different geometrical configurations, such as nadir and oblique imagery and different stereo models, was confirmed. Second, a clear proportional relationship between σDepth and σplaneFit was shown: if the precision of the single-view stereo point cloud doubles (σplaneFit halves), the geometrical depth precision doubles as well (σDepth halves).
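σplaneFit for a flat patch can be sketched as a least-squares plane fit followed by the standard deviation of the residuals. The sketch fits z = a·x + b·y + c and uses vertical residuals, which is adequate for the horizontal surfaces the thesis relies on; the patches are synthetic, hypothetical data with known noise, not thesis data. A proportional relationship means that the ratio σplaneFit / σDepth stays constant, so halving σDepth should halve σplaneFit.

```python
import numpy as np


def sigma_plane_fit(points):
    """Standard deviation of residuals to a least-squares plane z = a*x + b*y + c.

    points: (N, 3) array of a (nearly) horizontal, flat patch.
    """
    pts = np.asarray(points, dtype=float)
    A = np.column_stack([pts[:, 0], pts[:, 1], np.ones(len(pts))])
    coeffs, *_ = np.linalg.lstsq(A, pts[:, 2], rcond=None)
    residuals = pts[:, 2] - A @ coeffs
    return float(np.std(residuals, ddof=3))  # three plane parameters estimated


def synthetic_flat_patch(noise_m, n=5000, seed=0):
    """Hypothetical horizontal patch at z = 50 m with Gaussian height noise."""
    rng = np.random.default_rng(seed)
    xy = rng.uniform(0.0, 10.0, size=(n, 2))
    z = 50.0 + rng.normal(0.0, noise_m, size=n)
    return np.column_stack([xy, z])


s_coarse = sigma_plane_fit(synthetic_flat_patch(0.10, seed=1))
s_fine = sigma_plane_fit(synthetic_flat_patch(0.05, seed=2))
print(f"sigma_planeFit: {s_coarse:.3f} m vs {s_fine:.3f} m, ratio {s_coarse / s_fine:.2f}")
```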

For the further quantitative evaluation of the defined measures, four datasets from different image acquisition scenarios were chosen: aerial, UAV and close range. Several areas were evaluated within these datasets, which led to further results. It was confirmed that lower texture quality leads to lower point cloud precision, whereas higher texture quality leads to higher precision. The behaviour of σplaneFit, which decreases continuously as texture quality increases, is also similar across the datasets. However, the absolute precision values lie in different ranges. Through the scaling effect of σDepth, the datasets nevertheless become comparable: it was shown that σDepth scales σplaneFit of each dataset to a similar range. Consequently, a transfer function x(t) could be found that is robust against changes in the geometrical configuration, since such changes are compensated by a corresponding change in the precision of the point cloud. For the tested datasets, x(t) is independent of the dataset.
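The texture quality measure t is described only as a tuned high-pass image filter, so the following is a hypothetical stand-in: a 3×3 discrete Laplacian whose mean absolute response over an image patch serves as t. It reproduces the qualitative behaviour discussed above: an untextured patch gives t = 0, and the same texture with half the contrast (a crude model of weaker illumination) gives half the value. The kernel, the patch size and the contrast model are all assumptions.

```python
import numpy as np

# Hypothetical high-pass kernel (discrete Laplacian); not the thesis's tuned filter.
LAPLACIAN = np.array([[0.0, 1.0, 0.0],
                      [1.0, -4.0, 1.0],
                      [0.0, 1.0, 0.0]])


def high_pass(image):
    """Apply the 3x3 kernel to all interior pixels of a 2-D grey-value image."""
    img = np.asarray(image, dtype=float)
    h, w = img.shape
    out = np.zeros((h - 2, w - 2))
    for dy in range(3):
        for dx in range(3):
            out += LAPLACIAN[dy, dx] * img[dy:dy + h - 2, dx:dx + w - 2]
    return out


def texture_quality(patch):
    """Texture measure t: mean absolute high-pass response within the patch."""
    return float(np.mean(np.abs(high_pass(patch))))


rng = np.random.default_rng(0)
textured = rng.uniform(0.0, 255.0, size=(32, 32))  # strongly textured patch
dim_light = 0.5 * textured                          # same texture, half the contrast
flat = np.full((32, 32), 128.0)                     # untextured surface

t_tex, t_dim, t_flat = (texture_quality(p) for p in (textured, dim_light, flat))
print(t_tex, t_dim, t_flat)
```

A linear filter keeps t proportional to image contrast, which is one simple way to make lower illumination map to lower texture quality.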

Conclusions

Within the thesis, a geometrical local point-wise quality measure based on [Rothermel, 2012] was introduced and evaluated. Since it considers both image scale and base-to-height ratio, this quality measure alone already leads to significant improvements in the filtering of DIM point clouds. A plane fit in flat, horizontal areas proved to be a reasonable reference within this thesis. As the precision of point clouds also depends on texture quality, a texture quality measure was defined using a high-pass image filter. Finally, a texture-dependent model was approximated empirically through regression; it serves as a quality measure for multi-view stereo and can be applied to filter DIM point clouds effectively.
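The regression and filtering steps can be sketched as follows. The summary does not give the functional form of x(t), so this sketch assumes a power law x(t) = a · t^b for the normalized precision x = σplaneFit / σDepth, fits it as a straight line in log-log space, and predicts a texture-dependent point precision σ = x(t) · σDepth. Points whose predicted σ exceeds a chosen threshold are dropped. The calibration values are synthetic (generated from a = 2, b = -0.5) and only show that the fit recovers known parameters.

```python
import numpy as np


def fit_transfer_function(t, sigma_plane_fit, sigma_depth):
    """Fit the assumed power law x(t) = a * t**b to x = sigma_planeFit / sigma_Depth."""
    x = np.asarray(sigma_plane_fit, float) / np.asarray(sigma_depth, float)
    b, log_a = np.polyfit(np.log(t), np.log(x), deg=1)  # slope, intercept
    return float(np.exp(log_a)), float(b)


def predicted_sigma(sigma_depth, t, a, b):
    """Texture-dependent point-wise precision: x(t) * sigma_Depth."""
    return a * np.asarray(t, float) ** b * np.asarray(sigma_depth, float)


def keep_mask(sigma_depth, t, a, b, max_sigma):
    """True for DIM points whose predicted precision is at most max_sigma (m)."""
    return predicted_sigma(sigma_depth, t, a, b) <= max_sigma


# Synthetic calibration patches (hypothetical values).
t_cal = np.array([5.0, 10.0, 20.0, 40.0, 80.0])
sd_cal = np.array([0.08, 0.10, 0.12, 0.09, 0.11])
sp_cal = 2.0 * t_cal ** -0.5 * sd_cal
a, b = fit_transfer_function(t_cal, sp_cal, sd_cal)

# Three hypothetical DIM points with equal sigma_Depth but different texture.
mask = keep_mask([0.1, 0.1, 0.1], [4.0, 16.0, 100.0], a, b, max_sigma=0.06)
print(a, b, mask)
```

With these values the predicted precisions are 0.10 m, 0.05 m and 0.02 m, so only the poorly textured first point is removed. Because σDepth already absorbs the geometry, a single x(t) can in principle be reused across datasets, as reported above for the tested data.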

References

[Rothermel, 2012] Mathias Rothermel. Development of a SGM-Based Multi-View Reconstruction Framework for Aerial Imagery. Verlag der Bayerischen Akademie der Wissenschaften, München, 2016. ISBN 978-3-7696-5204-8.

Contact

Norbert Haala

apl. Prof. Dr.-Ing., Deputy Head of Institute